September 28th, 2008

Clojure

I know I’ve mentioned Clojure now and again in this blog, but I haven’t actually talked that much about it. I feel it’s time to change that right now – Clojure is in the air and it’s looking really interesting. More and more people are talking about it, and after the great presentation Rich gave at the JVM language summit I feel that there might be some more converts in the world.

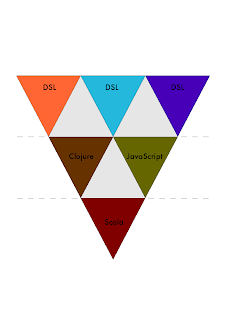

So what is it? Well, a new Lisp dialect for the JVM. It was originally targeting both the JVM and .NET but Rich ended up not going through with that (a decision I can understand after seeing the efforts Fan have to expend to continue providing this feature).

It’s specifically not an implementation of either Common Lisp nor Scheme, but instead a totally new language that’s got some interesting features. The most striking feature of it is the way it embraces functional programming. In comparison to Common Lisp who I characterize as being a multiparadigm language, Clojure has a heavy bent towards functional programming. This includes a focus on immutable data structures and support for good concurrency models. He’s even got an implementation of STM in there, which is really cool.

So what do I think about it? First of all, it’s definitely a very interesting language. It’s also taken the ideas of Lisp and twisting them a bit, adding some new ideas and refining some old ones. If I wanted to do concurrency programming for the JVM I would probably lean more towards Clojure than Scala, for example.

All that said, I am in two minds about the language. It is definitely extremely cool and it looks very useful. The libraries specifically have lots to say for them. But the other side of it for me is from the point of Lisp purity. One of the things I really like about Lisps is that they are very simple. The syntax is extremely small and in most cases everything will just be either lists or atoms and nothing else. Common Lisp can handle other syntax with reader macros – which end up with results that are still only lists and atoms. This is extremely powerful. Clojure has this to a degree, but adds several basic composite data structures that are not lists, such as sets, arrays and maps. From a pragmatic standpoint I can understand that, but the fact that they are basic syntax instead of reader macros mean that if I want to process Clojure code I will end up having to work with several kinds of composite data structures instead of just one.

This might seem like a small thing, and it’s definitely not something that would stop me from using the language. But the Lisp lover in me cringes a bit at this decision.

All in all Clojure is really cool and I recommend people to take a look at it. It’s getting lots of attention and people are writing about it. Stu Halloway is currently in the process of porting Practical Common Lisp to Clojure, and I recently saw a blog post about someone porting On Lisp to Clojure, so there is absolutely an interest in it. The question is how this will continue. As I’ve started saying more and more: these are interesting times for language geeks.